Comments:

How exactly does it perform compared to http://papers-gamma.link/paper/199?

Comments:

The paper, also accessible at https://academic.oup.com/nar/article/49/6/3139/6166853, accompanies the Nullomers Database at https://nullomers.org.

Comments:

The combinatorial community is awesome!

On 6 Jan 2021, me and Jean-Luc Baril, we submitted to arXiv a paper

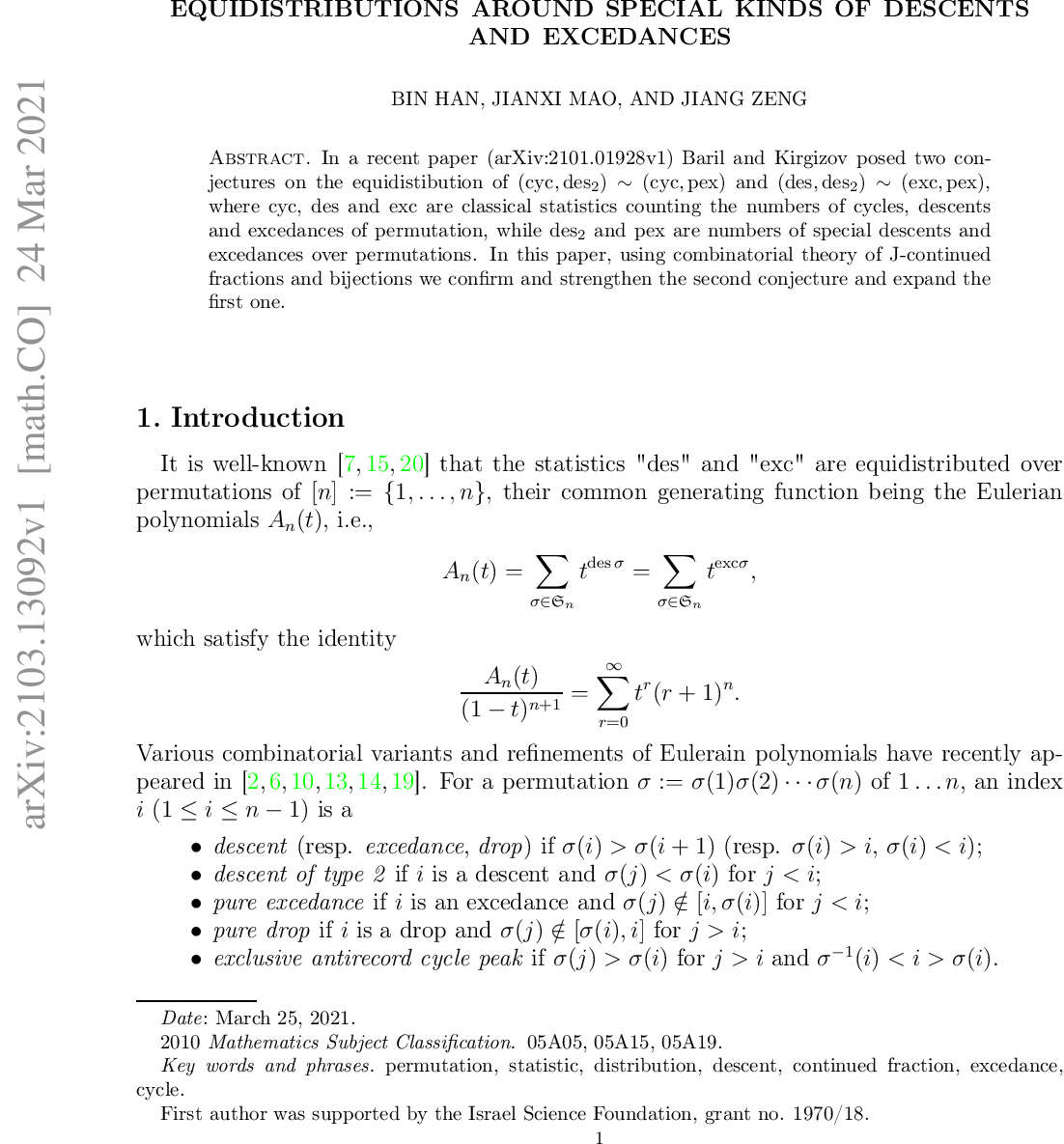

about a [transformation à la Foata for special kinds of descents and excedances in permutattions](https://arxiv.org/abs/2103.13092). At the end of this paper we present two conjectures that straighten our result.
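For readers unfamiliar with the phrase "à la Foata": it refers to the classical fundamental transformation, which relates excedance-type statistics of a permutation to descent-type statistics of a word. The sketch below is only that classical map, not the transformation from our paper, and the function names are illustrative. It writes each cycle with its largest element first, sorts the cycles by increasing largest element, and erases the parentheses. Under this convention the descents of the resulting word are exactly the drops of the original permutation (positions i with σ(i) < i), and the code checks on a small case that descents and excedances have the same distribution (the Eulerian numbers).

```python
from itertools import permutations
from collections import Counter


def cycles(perm):
    """Cycle decomposition of a permutation of 1..n given in one-line notation."""
    n = len(perm)
    seen = [False] * (n + 1)
    result = []
    for start in range(1, n + 1):
        if seen[start]:
            continue
        cyc, i = [], start
        while not seen[i]:
            seen[i] = True
            cyc.append(i)
            i = perm[i - 1]  # follow i -> sigma(i)
        result.append(cyc)
    return result


def foata(perm):
    """Classical fundamental transformation (illustrative sketch):
    rotate each cycle so its largest element comes first, order cycles
    by increasing largest element, then concatenate them into one word."""
    canon = []
    for cyc in cycles(perm):
        k = cyc.index(max(cyc))
        canon.append(cyc[k:] + cyc[:k])  # rotation keeps the cyclic order
    canon.sort(key=lambda c: c[0])
    return tuple(x for c in canon for x in c)


def descents(word):
    """Number of positions i with word[i] > word[i+1]."""
    return sum(1 for a, b in zip(word, word[1:]) if a > b)


def excedances(perm):
    """Number of i with sigma(i) > i."""
    return sum(1 for i, v in enumerate(perm, start=1) if v > i)


def drops(perm):
    """Number of i with sigma(i) < i."""
    return sum(1 for i, v in enumerate(perm, start=1) if v < i)


if __name__ == "__main__":
    perms = list(permutations(range(1, 5)))
    # Both lines print the Eulerian numbers for n = 4.
    print(sorted(Counter(descents(p) for p in perms).items()))
    print(sorted(Counter(excedances(p) for p in perms).items()))
```

Since every cycle ends with an element whose image is the cycle maximum, and consecutive cycles always meet in an ascent, every descent of the word comes from a drop inside a cycle, which is why the per-permutation identity holds.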

On March 24, 2021, Bin Han, Jianxi Mao and Jiang Zeng announced a proof of our second conjecture. The paper above presents their work. The first conjecture remains open.

Comments: