Comments:

Nice paper, in the line of work of [Dasgupta](https://papers-gamma.link/paper/155) and [Cohen-Addad et al.](https://arxiv.org/pdf/1704.02147.pdf).

The paper proposes a function that quantifies the quality of a hierarchical graph clustering (a dendrogram).
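For intuition about what such a quality function measures, here is a minimal sketch of Dasgupta's cost, the earlier objective cited above, and not the function Q proposed in this paper. It sums, over all edges, the edge weight times the number of leaves under the lowest common ancestor of the two endpoints; lower is better. The nested-tuple dendrogram encoding and the names `leaves` and `dasgupta_cost` are assumptions made for illustration.

```python
from itertools import chain


def leaves(tree):
    """Return the leaf labels under a dendrogram node.

    Assumed encoding: a leaf is any non-tuple label,
    an internal node is a tuple of sub-dendrograms.
    """
    if not isinstance(tree, tuple):
        return [tree]
    return list(chain.from_iterable(leaves(child) for child in tree))


def dasgupta_cost(tree, edges):
    """Dasgupta's cost of a dendrogram for a weighted edge list.

    edges is an iterable of (u, v, weight) triples. Each edge pays
    weight * (number of leaves under the lowest common ancestor of u and v).
    """
    if not isinstance(tree, tuple):
        return 0.0
    child_leaves = [set(leaves(child)) for child in tree]
    n_here = sum(len(s) for s in child_leaves)

    def side(x):
        # Index of the child subtree containing x, or None if x is not below this node.
        for k, s in enumerate(child_leaves):
            if x in s:
                return k
        return None

    cost = 0.0
    for u, v, w in edges:
        su, sv = side(u), side(v)
        # The edge is split at this node exactly when its endpoints fall in different children.
        if su is not None and sv is not None and su != sv:
            cost += w * n_here
    return cost + sum(dasgupta_cost(child, edges) for child in tree)


# Path graph a - b - c - d with unit weights.
path_edges = [("a", "b", 1.0), ("b", "c", 1.0), ("c", "d", 1.0)]
good = dasgupta_cost((("a", "b"), ("c", "d")), path_edges)  # 8.0
bad = dasgupta_cost((("a", "c"), ("b", "d")), path_edges)   # 12.0
```

The tree that merges adjacent nodes first gets the lower cost, which is the behaviour one expects from any sensible dendrogram quality measure. The recursion recomputes leaf sets at every level, so this is only suitable for small examples.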

An interesting application is also proposed: compressing a dendrogram.

Section 8: if the input graph is a complete bipartite graph, then the quality function Q is maximized when the graph is partitioned into its two independent sets.

Comments:

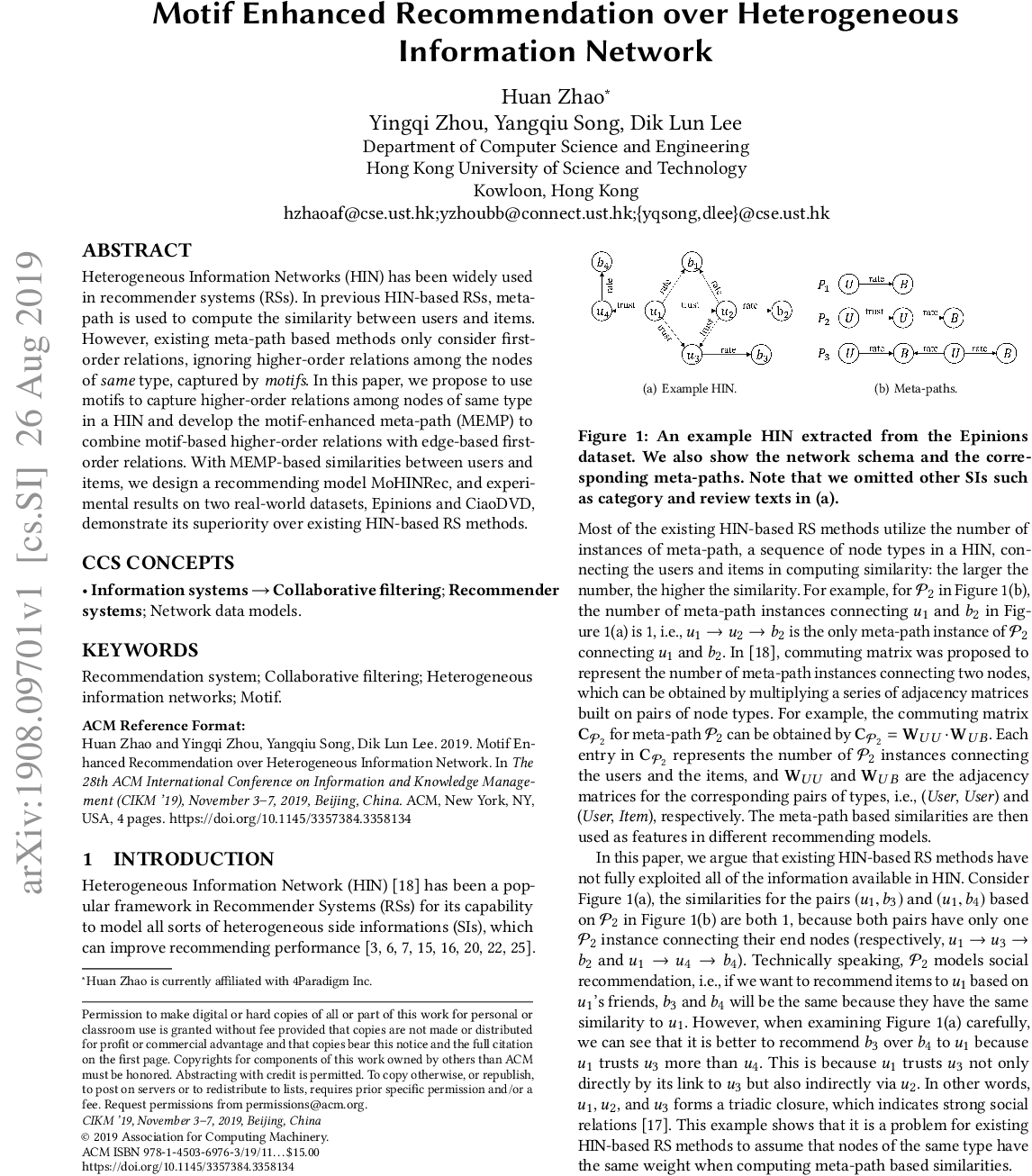

For a quick skim: see Figure 1, Figure 4, and the conclusion.

Can we use a hierarchical tree directly as input to machine learning algorithms instead of vectors?

Code:

- https://github.com/maxdan94/LouvainNE

- https://github.com/maxdan94/RandNE

Comments: