Welcome to Open Reading Group's library.

Here you can find all papers liked or uploaded by Open Reading Group, together with a brief user bio and a description of their academic activity.

### Upcoming readings:

No upcoming readings for now...

### Past Readings:

- 21/05/2018 [Graph Embedding Techniques, Applications, and Performance: A Survey](https://papers-gamma.link/paper/52)

- 14/05/2018 [A Fast and Provable Method for Estimating Clique Counts Using Turán’s Theorem](https://papers-gamma.link/paper/24)

- 07/05/2018 [VERSE: Versatile Graph Embeddings from Similarity Measures](https://papers-gamma.link/paper/48)

- 30/04/2018 [Hierarchical Clustering Beyond the Worst-Case](https://papers-gamma.link/paper/45)

- 16/04/2018 [Scalable Motif-aware Graph Clustering](https://papers-gamma.link/paper/18)

- 02/04/2018 [Practical Algorithms for Linear Boolean-width](https://papers-gamma.link/paper/40)

- 26/03/2018 [New Perspectives and Methods in Link Prediction](https://papers-gamma.link/paper/28/New%20Perspectives%20and%20Methods%20in%20Link%20Prediction)

- 19/03/2018 [In-Core Computation of Geometric Centralities with HyperBall: A Hundred Billion Nodes and Beyond](https://papers-gamma.link/paper/37)

- 12/03/2018 [Diversity is All You Need: Learning Skills without a Reward Function](https://papers-gamma.link/paper/36)

- 05/03/2018 [When Hashes Met Wedges: A Distributed Algorithm for Finding High Similarity Vectors](https://papers-gamma.link/paper/23)

- 26/02/2018 [Fast Approximation of Centrality](https://papers-gamma.link/paper/35/Fast%20Approximation%20of%20Centrality)

- 19/02/2018 [Indexing Public-Private Graphs](https://papers-gamma.link/paper/19/Indexing%20Public-Private%20Graphs)

- 12/02/2018 [On the uniform generation of random graphs with prescribed degree sequences](https://papers-gamma.link/paper/26/On%20the%20uniform%20generation%20of%20random%20graphs%20with%20prescribed%20d%20egree%20sequences)

- 05/02/2018 [Linear Additive Markov Processes](https://papers-gamma.link/paper/21/Linear%20Additive%20Markov%20Processes)

- 29/01/2018 [ESCAPE: Efficiently Counting All 5-Vertex Subgraphs](https://papers-gamma.link/paper/17/ESCAPE:%20Efficiently%20Counting%20All%205-Vertex%20Subgraphs)

- 22/01/2018 [The k-peak Decomposition: Mapping the Global Structure of Graphs](https://papers-gamma.link/paper/16/The%20k-peak%20Decomposition:%20Mapping%20the%20Global%20Structure%20of%20Graphs)

Comments:

### Very nice application to graph classification / clustering:

Motif frequencies are graph features that can be plugged into a machine learning algorithm in order to classify or cluster a set of graphs.
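
As a minimal sketch of this idea, the function below turns raw 5-vertex pattern counts into a frequency vector that could serve as a feature vector for one graph. The names `NUM_PATTERNS` and `motif_frequencies` are hypothetical, and the counts are assumed to come from some motif-counting tool; this is not code from the paper.

```c
#include <stddef.h>

/* Number of connected 5-vertex patterns (assumed to match the 21 patterns of the paper). */
#define NUM_PATTERNS 21

/*
 * Turn raw motif counts into frequencies that sum to 1.
 * counts: raw count of each pattern in one graph (hypothetical input).
 * freqs:  output feature vector for that graph.
 * Returns 0 on success, -1 if the graph contains no pattern at all.
 */
int motif_frequencies(const unsigned long long counts[NUM_PATTERNS],
                      double freqs[NUM_PATTERNS]) {
    long double total = 0.0L;
    for (size_t i = 0; i < NUM_PATTERNS; i++) {
        total += (long double)counts[i];
    }
    if (total == 0.0L) {
        return -1; /* nothing to normalize */
    }
    for (size_t i = 0; i < NUM_PATTERNS; i++) {
        freqs[i] = (double)((long double)counts[i] / total);
    }
    return 0;
}
```

Normalizing by the total makes graphs of different sizes comparable; whether frequencies or raw counts work better as features would depend on the task.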

### Very nice application to link prediction / recommendation:

See page 8, "Trends in pattern counts". Some motifs are rarely observed as induced motifs. Thus, if such a motif is seen at time $t$ in a dynamic graph, then an edge between a pair of its vertices might be created (or deleted) at time $t+1$.

Note that the framework only counts motifs without listing/enumerating them. Some adaptations are thus needed to deal with link prediction and recommendation.

### Application to graph generation:

Generate a random graph with a given number of each of the 21 possible 5-motifs.

### Some statements might be a little too strong:

- "As we see in our experiments, the counts of 5-vertex patterns are in the orders of billions to trillions, even for graphs with a few million edges. An enumeration algorithm is forced to touch each occurrence, and cannot terminate in a reasonable time."

- "The framework is absolutely critical for exact counting, since enumeration is clearly infeasible even for graphs with a million edges. For instance, an autonomous systems graph with 11M edges has $10^{11}$ instances of pattern 17 (in Fig. 1)."

- "For example, the tech-as-skitter graph has 11M edges, but 2 trillion 5-cycles. Most 5-vertex pattern counts are upwards of many billions for most of the graphs (which have only 10M edges). Any algorithm that explicitly touches each such pattern is bound to fail."

The following C program counts from 0 to one trillion. It terminates within 30 minutes on a commodity machine (compiled with the -O3 optimization option).

```c

/* Count from 0 up to one trillion (1e12). */
int main(void) {
    unsigned long long i = 0;
    while (i < 1e12) {
        i++;
    }
    return 0;
}

```

This shows that "touching" billions or trillions of motifs might actually be possible in a reasonable amount of time.
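
As a back-of-the-envelope check, taking the 30 minutes stated above as an upper bound, the implied throughput is:

$$
\frac{10^{12}\ \text{increments}}{30 \times 60\ \text{s}} \approx 5.6 \times 10^{8}\ \text{increments per second}
$$

This only accounts for the counter itself; any per-motif work done by a real enumeration algorithm would add to it.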

### Minors:

- "Degeneracy style ordering" might be confusing as a degree ordering is used and not a degeneracy ordering (i.e. k-core decomposition).

- Figure 7: "The line is defined by...", should be "The prediction is defined by..." as the line is just "$y=x$".
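
To illustrate the difference raised in the first point above, here is a naive sketch of a degeneracy ordering, which repeatedly removes a vertex of minimum remaining degree, whereas a degree ordering simply sorts vertices by their degree in the input graph. The names `MAX_N` and `degeneracy_ordering` and the adjacency-matrix representation are assumptions made for illustration, not the paper's implementation.

```c
#include <stdbool.h>

#define MAX_N 64 /* illustrative bound on the number of vertices */

/*
 * Naive degeneracy ordering on a symmetric 0/1 adjacency matrix.
 * order[k] receives the k-th removed vertex (ties go to the lowest index).
 * Returns the degeneracy, i.e. the largest remaining degree seen at removal.
 * Runs in O(n^2); bucket-based implementations achieve O(n + m).
 */
int degeneracy_ordering(int n, bool adj[MAX_N][MAX_N], int order[]) {
    int deg[MAX_N];
    bool removed[MAX_N] = {false};
    int degeneracy = 0;

    /* Initial degrees in the input graph. */
    for (int u = 0; u < n; u++) {
        deg[u] = 0;
        for (int v = 0; v < n; v++) {
            deg[u] += adj[u][v] ? 1 : 0;
        }
    }
    for (int k = 0; k < n; k++) {
        /* Pick a remaining vertex of minimum remaining degree. */
        int best = -1;
        for (int u = 0; u < n; u++) {
            if (!removed[u] && (best < 0 || deg[u] < deg[best])) {
                best = u;
            }
        }
        if (deg[best] > degeneracy) {
            degeneracy = deg[best];
        }
        removed[best] = true;
        order[k] = best;
        /* Removing best lowers the degree of its remaining neighbors. */
        for (int v = 0; v < n; v++) {
            if (adj[best][v] && !removed[v]) {
                deg[v]--;
            }
        }
    }
    return degeneracy;
}
```

On a triangle {0, 1, 2} with a pendant vertex 3 attached to 0, sorting by input degree places vertex 0 last, while this procedure removes it second, so the two orderings differ even on tiny graphs.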

### Typos:

- "they do not scale graphs of such sizes."

- "For example, the 6th 4-pattern in the four-clique and the 8th 5-pattern is the five-cycle."

- "and $O\big(n+m+T(G)$ storage."

- "An alternate (not equivalent) measure is the see the fraction of 4-cycle"

- "an automonous systems graph with"

### Others:

- https://oeis.org/A001349

- Is it possible to generalize the framework to any k?

A program for listing all k-motifs: https://github.com/maxdan94/kmotif

Comments:

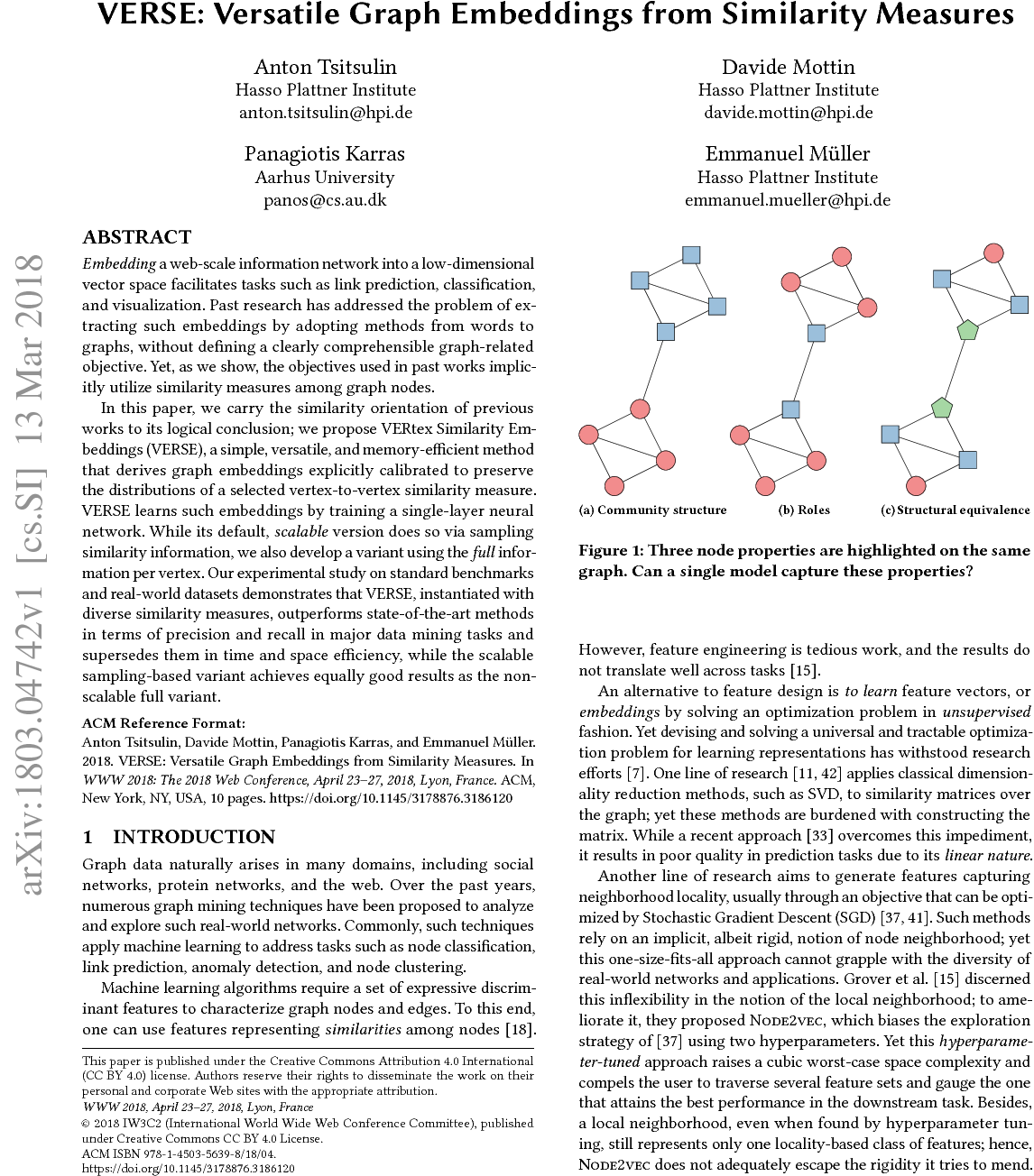

### Nice validation of the obtained embedding:

The obtained embedding seems useful for: (i) node clustering, (ii) link prediction and (iii) node classification.

### Concerns about the scalability of Louvain:

The Louvain algorithm can process Orkut in a few seconds on a commodity machine, contrary to what Table 2 suggests. The modularity it obtains is 0.68, as reported in Table 3 (the table presenting the datasets). This value is much higher than the modularity obtained with embeddings and k-means.

### Table 2 reporting the average and worst case running time is confusing:

In Table 2, $\Theta$ is used to denote the average running time and $O$ is used to denote the worst-case running time. This is very confusing, as a worst-case running time can be stated with either big-O or $\Theta$.

Definitions are given here: https://en.wikipedia.org/wiki/Big_O_notation
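
For reference, the standard definitions can be written as follows, for functions $f$ and $g$ that are positive for large $n$:

$$
f(n) = O(g(n)) \iff \exists\, c > 0,\ \exists\, n_0,\ \forall n \ge n_0:\ f(n) \le c\, g(n)
$$

$$
f(n) = \Theta(g(n)) \iff \exists\, c_1, c_2 > 0,\ \exists\, n_0,\ \forall n \ge n_0:\ c_1\, g(n) \le f(n) \le c_2\, g(n)
$$

Both notations describe the growth rate of a function; neither is tied to the average case or to the worst case.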

It is also not clear how these asymptotic complexities were obtained. In particular, the asymptotic time and memory complexities of the suggested algorithm are not proven.

### VERSE Embedding Model

"We define the unnormalized distance between two nodes $u,v$ in the embedding space as the dot product of their embeddings $W_u \cdot W_v^\top$. The similarity distribution in the embedded space is then normalized with softmax."

What is the intuition behind the softmax?
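
One way to write the normalization described in the quote, as a sketch in which $W_u$ is the embedding of node $u$, $n$ is the number of nodes, and the name $\mathrm{sim}_E$ is an assumed notation:

$$
\mathrm{sim}_E(u, v) = \frac{\exp\left(W_u \cdot W_v^\top\right)}{\sum_{i=1}^{n} \exp\left(W_u \cdot W_i^\top\right)}
$$

For a fixed $u$, these values are positive and sum to 1 over $v$, so they form a probability distribution, presumably so that it can be compared with a per-node similarity distribution defined on the graph.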

> Perhaps, an interesting research direction would be something like embedding-guided modularity optimization.

Maybe using the modularity-matrix (https://en.wikipedia.org/wiki/Modularity_(networks)#Matrix_formulation) as the similarity matrix used in VERSE?

This matrix can have negative entries though...
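
For reference, with adjacency matrix $A$, degrees $k_i$ and $m$ edges, the modularity matrix from the linked page is:

$$
B_{ij} = A_{ij} - \frac{k_i k_j}{2m}
$$

so $B_{ij} < 0$ whenever $i$ and $j$ are not adjacent and both have positive degree.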

Hi!

> If you see the matrix $W$ used in LINE as the similarity matrix you use in VERSE, then yes I think that what I have suggested is similar to LINE. So VERSE can be seen as using LINE on the input similarity matrix? Is that correct?

VERSE can be seen as going beyond this ;)

If we just plug similarities into the LINE training objective, we observe (Fig. 3, NS line) that the objective does not recover similarities at all! This is very surprising, and suggests that current embedding methods such as node2vec or LINE do not optimize the actual objective they claim to optimize. When I discovered that, I thought this was just a bug in the code, so I made a version of VERSE that optimizes the random-walk-based similarity from DeepWalk. It turned out that DeepWalk does not preserve the similarity ranking of these walks.

> I've missed that. You mean that in LINE, "negative sampling" is used for the optimization; while in VERSE, "Noise Contrastive Estimation" is used?

Yes! To overcome the aforementioned problem, we have proposed an NCE-based sampling solution. It seems to work much better than plain negative sampling, and we show that it is close to the full neural factorization of the matrix (which is only possible for the VERSE model)!

>

>> Perhaps, an interesting research direction would be something like embedding-guided modularity optimization.

>

> Maybe using the modularity-matrix (https://en.wikipedia.org/wiki/Modularity_(networks)#Matrix_formulation) as the similarity matrix used in VERSE?

> This matrix can have negative entries though...

Yes, the modularity matrix would probably not suit the algorithm well, as it is not probabilistic per node. Maybe some reformulation would help, but I am not aware of any to this day.

Comments: